Introduction: The Convergence of Sensor Technologies

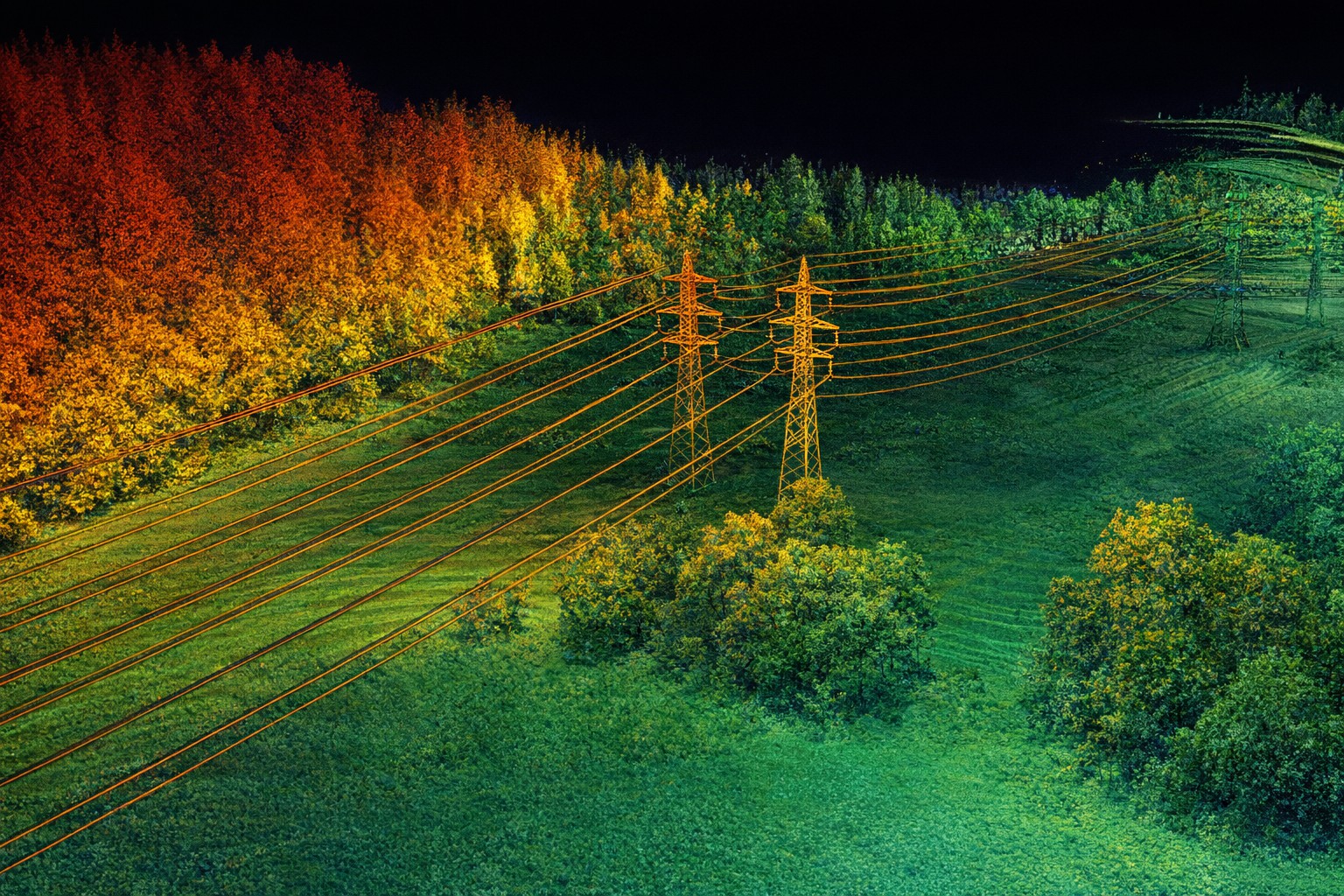

The future of autonomous perception isn't LiDAR vs. camera; it's LiDAR + camera. Forward-thinking companies across autonomous driving, robotics, and spatial computing are rapidly converging on multi-modal sensor fusion stacks. This convergence represents a fundamental shift in how AI perceives the world, and it's creating massive demand for specialized annotation talent.

Why Sensor Fusion is the New Standard

LiDAR and camera data complement each other in profound ways:

- LiDAR Strengths: Precise 3D geometry, direct range measurement, operation independent of ambient light (including at night)

- Camera Strengths: Color information, semantic context, long-range detection, computational efficiency

- Fusion Benefits: Improved robustness, extended detection range, better semantic understanding, redundancy and safety

The result: perception systems that are more robust, more intelligent, and more reliable than any single modality alone.

The Technical Challenges of Multi-Modal Annotation

1. Temporal Synchronization

LiDAR and camera data arrive on different timing schedules. Accurate fusion annotation requires precise temporal alignment, typically to within 10-50 ms. Annotators must understand sensor timing, frame rates, and latency characteristics to correctly label synchronized multi-modal data.
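As a rough illustration of the alignment step, the sketch below pairs each LiDAR sweep with the nearest camera frame and drops pairs whose offset exceeds a tolerance. The function name `match_frames`, the 50 ms default, and the sample timestamps are assumptions made for this example, not part of any particular annotation platform.

```python
from bisect import bisect_left


def match_frames(lidar_ts, camera_ts, max_offset_s=0.05):
    """Pair each LiDAR sweep with the nearest camera frame by timestamp.

    lidar_ts and camera_ts are timestamps in seconds; camera_ts must be
    sorted ascending. Returns (lidar_index, camera_index, offset_seconds)
    tuples, with None entries when no frame is within max_offset_s.
    """
    pairs = []
    for i, t in enumerate(lidar_ts):
        pos = bisect_left(camera_ts, t)
        # Only the frames just before and just after t can be the nearest.
        candidates = [j for j in (pos - 1, pos) if 0 <= j < len(camera_ts)]
        if not candidates:
            pairs.append((i, None, None))
            continue
        best = min(candidates, key=lambda j: abs(camera_ts[j] - t))
        offset = abs(camera_ts[best] - t)
        if offset <= max_offset_s:
            pairs.append((i, best, offset))
        else:
            pairs.append((i, None, None))
    return pairs


# Hypothetical timestamps: a 10 Hz LiDAR and a ~30 Hz camera that stops early.
lidar = [0.00, 0.10, 0.20, 0.30]
camera = [0.004, 0.037, 0.071, 0.104, 0.137, 0.171, 0.204]
pairs = match_frames(lidar, camera)
```

In this sample the last LiDAR sweep has no camera frame within tolerance, so it comes back unmatched rather than being paired with a stale image.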

2. Spatial Calibration

Every LiDAR-Camera pair has a specific spatial relationship: extrinsic calibration parameters determine the rotation and translation between sensors. Annotation quality depends critically on understanding this calibration. Errors in calibration propagate directly into annotation errors.
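To make the propagation concrete, here is a minimal sketch that applies an extrinsic rotation and translation to a LiDAR point and projects it with a pinhole model, then repeats the projection with a small yaw error. The axis conventions, the intrinsics (`fx`, `fy`, `cx`, `cy`), and the 0.5 degree error are all assumed values for illustration; lens distortion is ignored.

```python
import math


def lidar_to_pixel(point, R, t, fx, fy, cx, cy):
    """Project a LiDAR-frame point to pixel coordinates.

    R (3x3 nested lists) and t (length 3) are the extrinsics mapping the
    LiDAR frame into the camera frame. The camera frame is assumed to be
    x right, y down, z forward. Returns None for points behind the camera.
    """
    xc, yc, zc = (
        sum(R[r][c] * point[c] for c in range(3)) + t[r] for r in range(3)
    )
    if zc <= 0:
        return None
    return (fx * xc / zc + cx, fy * yc / zc + cy)


def rotate_about_z(point, degrees):
    """Rotate a point about the LiDAR z axis, emulating a yaw miscalibration."""
    a = math.radians(degrees)
    x, y, z = point
    return (x * math.cos(a) - y * math.sin(a), x * math.sin(a) + y * math.cos(a), z)


# Assumed LiDAR axes: x forward, y left, z up. No lever arm, for simplicity.
R = [[0, -1, 0], [0, 0, -1], [1, 0, 0]]
t = [0.0, 0.0, 0.0]
intrinsics = dict(fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)

point = (10.0, 1.0, 0.5)
good = lidar_to_pixel(point, R, t, **intrinsics)  # (540.0, 310.0)
bad = lidar_to_pixel(rotate_about_z(point, 0.5), R, t, **intrinsics)
shift_px = abs(bad[0] - good[0])
```

Even this half-degree yaw error moves the projected point by several pixels at 10 m, which is enough to put a label on the wrong side of an object boundary.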

3. Occlusion Handling

When the camera can't see an object but LiDAR detects it (or vice versa), how should it be labeled? These multi-modal occlusion scenarios require explicit protocols. Should annotations exist in both modalities regardless of visibility? How should confidence scores differ? Inconsistent handling creates subtle but damaging model degradation.
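One way to make such a protocol explicit is to record visibility per modality on every annotation. The sketch below is a hypothetical schema, not an established standard: the `Visibility` levels, the `FusedAnnotation` fields, and the review rule are assumptions chosen for this example.

```python
from dataclasses import dataclass
from enum import Enum


class Visibility(Enum):
    VISIBLE = "visible"
    PARTIAL = "partially_occluded"
    NOT_VISIBLE = "not_visible"


@dataclass
class FusedAnnotation:
    """One object, labeled once, with visibility tracked per sensor."""

    object_id: str
    label: str
    lidar_visibility: Visibility
    camera_visibility: Visibility

    def needs_review(self) -> bool:
        # Assumed policy: an object seen by exactly one modality is kept
        # in both, but routed to a reviewer.
        seen_lidar = self.lidar_visibility is not Visibility.NOT_VISIBLE
        seen_camera = self.camera_visibility is not Visibility.NOT_VISIBLE
        return seen_lidar != seen_camera


pedestrian = FusedAnnotation(
    "obj_12", "pedestrian", Visibility.VISIBLE, Visibility.NOT_VISIBLE
)
car = FusedAnnotation("obj_13", "car", Visibility.VISIBLE, Visibility.PARTIAL)
```

Whatever policy a team picks, encoding it in the schema keeps the handling consistent across annotators instead of leaving it to individual judgment.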

4. Cross-Modal Consistency

Objects must be labeled consistently across modalities. An object labeled at position (x, y, z) in LiDAR space must land on the same object in camera space when projected, to within a small pixel tolerance that accounts for calibration and timing error. This cross-modal verification is computationally intensive but critically important.
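A simple version of this check measures how far the projected 3D center falls from the center of the matching 2D box. The sketch assumes the 3D center is already expressed in the camera frame, uses an undistorted pinhole model, and picks a 5-pixel tolerance arbitrarily; all names and values are illustrative.

```python
import math


def project(center_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point (z forward) to pixels."""
    x, y, z = center_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    return fx * x / z + cx, fy * y / z + cy


def reprojection_error_px(center_cam, box2d, fx, fy, cx, cy):
    """Pixel distance between the projected 3D center and the 2D box center.

    box2d is (x_min, y_min, x_max, y_max) in pixels.
    """
    u, v = project(center_cam, fx, fy, cx, cy)
    box_u = (box2d[0] + box2d[2]) / 2
    box_v = (box2d[1] + box2d[3]) / 2
    return math.hypot(u - box_u, v - box_v)


# Hypothetical label pair for one object.
error = reprojection_error_px(
    (-1.0, -0.5, 10.0), (500, 280, 584, 344), fx=1000.0, fy=1000.0, cx=640.0, cy=360.0
)
is_consistent = error <= 5.0  # assumed tolerance in pixels
```

Center distance is a coarse test; comparing the full projected box against the 2D box is stricter, at the cost of more computation per object.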

Best Practices for Multi-Modal Annotation

Unified Reference Frames

Always annotate in a unified reference frame, typically the vehicle frame. All sensor data gets projected into this common frame before annotation begins, eliminating ambiguity about which modality is authoritative.
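With every sensor's pose stored relative to the vehicle, any sensor-to-sensor transform can be derived rather than calibrated separately. The following sketch uses 4x4 homogeneous transforms in plain Python; the mounting positions and axis conventions are made-up values for illustration.

```python
def matmul4(A, B):
    """Multiply two 4x4 matrices given as nested lists."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def invert_rigid(T):
    """Invert a rigid transform [R | t] as [R^T | -R^T t]."""
    Rt = [[T[c][r] for c in range(3)] for r in range(3)]
    t = [T[r][3] for r in range(3)]
    new_t = [-sum(Rt[r][c] * t[c] for c in range(3)) for r in range(3)]
    return [Rt[r] + [new_t[r]] for r in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def apply(T, p):
    """Apply a 4x4 rigid transform to a 3D point."""
    return tuple(sum(T[r][c] * p[c] for c in range(3)) + T[r][3] for r in range(3))


# Assumed vehicle frame: x forward, y left, z up.
# LiDAR shares the vehicle axes and sits 1.0 m forward, 1.8 m up.
vehicle_from_lidar = [
    [1.0, 0.0, 0.0, 1.0],
    [0.0, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 1.8],
    [0.0, 0.0, 0.0, 1.0],
]
# Camera axes (x right, y down, z forward) mounted 1.5 m forward, 1.5 m up.
vehicle_from_camera = [
    [0.0, 0.0, 1.0, 1.5],
    [-1.0, 0.0, 0.0, 0.0],
    [0.0, -1.0, 0.0, 1.5],
    [0.0, 0.0, 0.0, 1.0],
]

point_lidar = (10.0, 1.0, 0.5)
point_vehicle = apply(vehicle_from_lidar, point_lidar)

# Derived, not separately calibrated: LiDAR -> vehicle -> camera.
camera_from_lidar = matmul4(invert_rigid(vehicle_from_camera), vehicle_from_lidar)
point_camera = apply(camera_from_lidar, point_lidar)
```

Annotations stored in the vehicle frame stay valid if a sensor is remounted; only that sensor's `vehicle_from_*` transform needs updating.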

Synchronized Visualization

Provide annotators with side-by-side or overlaid visualization of all modalities. Modern annotation platforms should display LiDAR point clouds with camera images overlaid, showing exactly how projections align. This dramatically improves annotation quality and catches obvious errors.

Automated Cross-Modal Validation

Implement machine learning systems that automatically verify cross-modal consistency. Compare LiDAR-derived 3D positions against camera detections. Flag inconsistencies for human review. This ML-assisted QA surfaces the bulk of errors automatically, so reviewers can concentrate on the flagged cases.
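The comparison step itself can be purely geometric once a detector has produced 2D boxes. The sketch below flags any LiDAR label whose projected box overlaps no camera detection well enough; the function names, the 0.5 IoU threshold, and the sample boxes are assumptions for this example, and the detector that would supply `detections` is out of scope.

```python
def iou(a, b):
    """Intersection over union of two (x_min, y_min, x_max, y_max) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def flag_inconsistent(projected, detections, min_iou=0.5):
    """Return (object_id, best_iou) for labels lacking a matching detection.

    projected maps object ids to LiDAR labels already projected into the
    image as 2D boxes; detections is a list of 2D boxes from the camera.
    """
    flagged = []
    for object_id, box in projected.items():
        best = max((iou(box, d) for d in detections), default=0.0)
        if best < min_iou:
            flagged.append((object_id, best))
    return flagged


# Hypothetical frame: the pedestrian label has no supporting camera detection.
projected = {"car_1": (100, 100, 200, 200), "ped_7": (400, 120, 430, 200)}
detections = [(105, 98, 205, 198), (600, 100, 650, 220)]
flagged = flag_inconsistent(projected, detections)
```

A flagged item is not necessarily a labeling error, since the detector may have missed an occluded object, which is why the output goes to a reviewer rather than being auto-corrected.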

Sensor-Specific Protocols

Document explicit protocols for each sensor type's limitations:

- How to handle reflective surfaces in LiDAR?

- How to label lens artifacts or motion blur in camera footage?

- What's the minimum confidence threshold for including an annotation?

Technology Stack for Multi-Modal Annotation

Specialized tools are emerging for multi-modal annotation:

- Nutonomy Scalability Frameworks: Built-in support for multi-sensor data

- Lyft Level 5 Dataset Tooling: Purpose-built for LiDAR-camera fusion workflows

- Custom Platforms: Companies like Tesla and Waymo maintain proprietary tooling optimized for their sensor stacks

- Emerging Solutions: New companies like Scale AI and Supervisely are rapidly adding multi-modal capabilities

Market Implications & Growth Opportunities

Multi-modal sensor fusion is unlocking massive growth in autonomous systems:

- Market Size: Autonomous vehicle perception testing market expected to exceed $15B by 2030

- Data Volume: A single autonomous vehicle generates 50-70GB of sensor data daily, so the total addressable market for annotation services is enormous

- Specialized Talent Premium: Annotators with multi-modal fusion expertise command 30-50% salary premiums over single-modality specialists

- Competitive Differentiation: Companies mastering multi-modal annotation will lead in perception model performance

Real-World Applications Driving Growth

Multi-modal fusion is delivering breakthrough results across industries:

- Autonomous Vehicles: Waymo and Cruise built their driverless stacks on fused LiDAR, camera, and radar data for Level 3+ autonomy, while Tesla remains a notable camera-first exception

- Robotics: Household and industrial robots require multi-modal perception for safe human interaction

- Smart City Infrastructure: Traffic monitoring, public safety, and infrastructure inspection rely on fused perception data

- Augmented Reality: Next-generation AR systems require precise spatial understanding enabled by sensor fusion

Future Outlook: What's Next?

The evolution continues toward even richer sensor combinations:

- Thermal + LiDAR + Camera: Adding thermal imaging for advanced night vision and thermal anomaly detection

- Radar + LiDAR + Camera: Combining radar's Doppler velocity information with LiDAR precision and camera semantics

- Event Cameras: Emerging event-based camera technology offers extreme temporal resolution, enabling new fusion possibilities

Conclusion: The Multi-Modal Imperative

Companies that master multi-modal LiDAR-camera fusion today will dominate autonomous perception for the next decade. The technical challenges are significant, but the competitive advantages are enormous. As the industry continues its rapid evolution, demand for specialized multi-modal annotation expertise will only intensify.

Is your project ready for multi-modal annotation? Kinetic LiDAR Labs specializes in LiDAR-camera fusion workflows. Let's discuss how we can accelerate your perception system development.