Everything you need to know about our annotation services, data handling, quality assurance, and project timelines for LiDAR and general datasets.

What types of LiDAR sensors do you annotate?

We handle all major LiDAR sensor types including Velodyne, Livox, Ouster, Hesai, RoboSense, and automotive-grade sensors. Whether you're working with 16-channel, 64-channel, 128-channel, or solid-state LiDAR, we have the expertise and tools to annotate your data accurately.

How long does LiDAR annotation take?

Project timelines depend on dataset size, complexity, and annotation requirements. Simple object detection projects typically take 2-4 weeks. Complex tracking and fusion projects may take 4-12 weeks. We provide a detailed timeline during the quote phase and scale the annotation team as needed to deliver on schedule.

How do you ensure annotation quality and accuracy?

We employ a multi-layer QA process with frame-level verification, consistency checks, and expert audits. Our trained annotators have domain expertise in autonomous vehicles, robotics, and perception systems. We measure and report accuracy metrics (precision, recall, F1-score) and maintain 99%+ accuracy standards.
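
For readers unfamiliar with the metrics mentioned above, here is a minimal Python sketch of how precision, recall, and F1-score are commonly computed from annotation review counts. The function name and the example counts are illustrative assumptions, not a description of any specific QA pipeline.

```python
def precision_recall_f1(tp: int, fp: int, fn: int) -> tuple[float, float, float]:
    """Compute precision, recall, and F1 from match counts.

    tp: labels that match a reference object (true positives)
    fp: labels with no matching reference object (false positives)
    fn: reference objects that were not labeled (false negatives)
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


# Hypothetical review counts for one batch of frames.
p, r, f1 = precision_recall_f1(tp=90, fp=10, fn=30)
print(f"precision={p:.3f} recall={r:.3f} f1={f1:.3f}")
```

How a label is counted as a match (for example, an overlap threshold between the label and a reference box) is a project-level choice that would normally be fixed in the annotation specification.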

What data formats do you support?

We support all major formats: KITTI, nuScenes, Argoverse, Lyft, Waymo, custom formats, and more. We can ingest various input formats and deliver in your preferred output format. Data format expertise includes coordinate systems, point cloud representations, and sensor fusion specifications.
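
As one concrete example of what a label format looks like, the sketch below parses a single line of a KITTI object label file, which stores one object per line as 15 space-separated fields (type, truncation, occlusion, observation angle, 2D box, 3D dimensions, 3D location, yaw). The class name, function name, and sample values are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class KittiLabel:
    category: str        # object type, e.g. "Car"
    truncated: float     # 0.0 (fully in image) to 1.0 (fully truncated)
    occluded: int        # occlusion state code
    alpha: float         # observation angle in radians
    bbox: tuple          # 2D box in pixels: left, top, right, bottom
    dimensions: tuple    # 3D size in meters: height, width, length
    location: tuple      # 3D position in camera coordinates: x, y, z
    rotation_y: float    # yaw around the camera Y axis in radians


def parse_kitti_line(line: str) -> KittiLabel:
    parts = line.split()
    if len(parts) < 15:
        raise ValueError(f"expected at least 15 fields, got {len(parts)}")
    v = [float(x) for x in parts[1:15]]
    return KittiLabel(
        category=parts[0],
        truncated=v[0],
        occluded=int(v[1]),
        alpha=v[2],
        bbox=tuple(v[3:7]),
        dimensions=tuple(v[7:10]),
        location=tuple(v[10:13]),
        rotation_y=v[13],
    )


# Made-up sample line for illustration only.
sample = "Car 0.00 0 -1.58 587.01 173.33 614.12 200.12 1.65 1.67 3.64 -0.65 1.71 46.70 -1.59"
label = parse_kitti_line(sample)
print(label.category, label.dimensions, label.location)
```

Other formats use different coordinate conventions and box parameterizations, so a converter between formats generally needs the sensor calibration as well as the labels themselves.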

How is my data protected during annotation?

Your data security is paramount. We use encrypted transfer, secure storage, NDA-signed teams, and compliance-ready infrastructure. We follow industry-standard data protection protocols and can accommodate custom security requirements for sensitive automotive or autonomous vehicle projects.

Can you handle proprietary or custom sensor data?

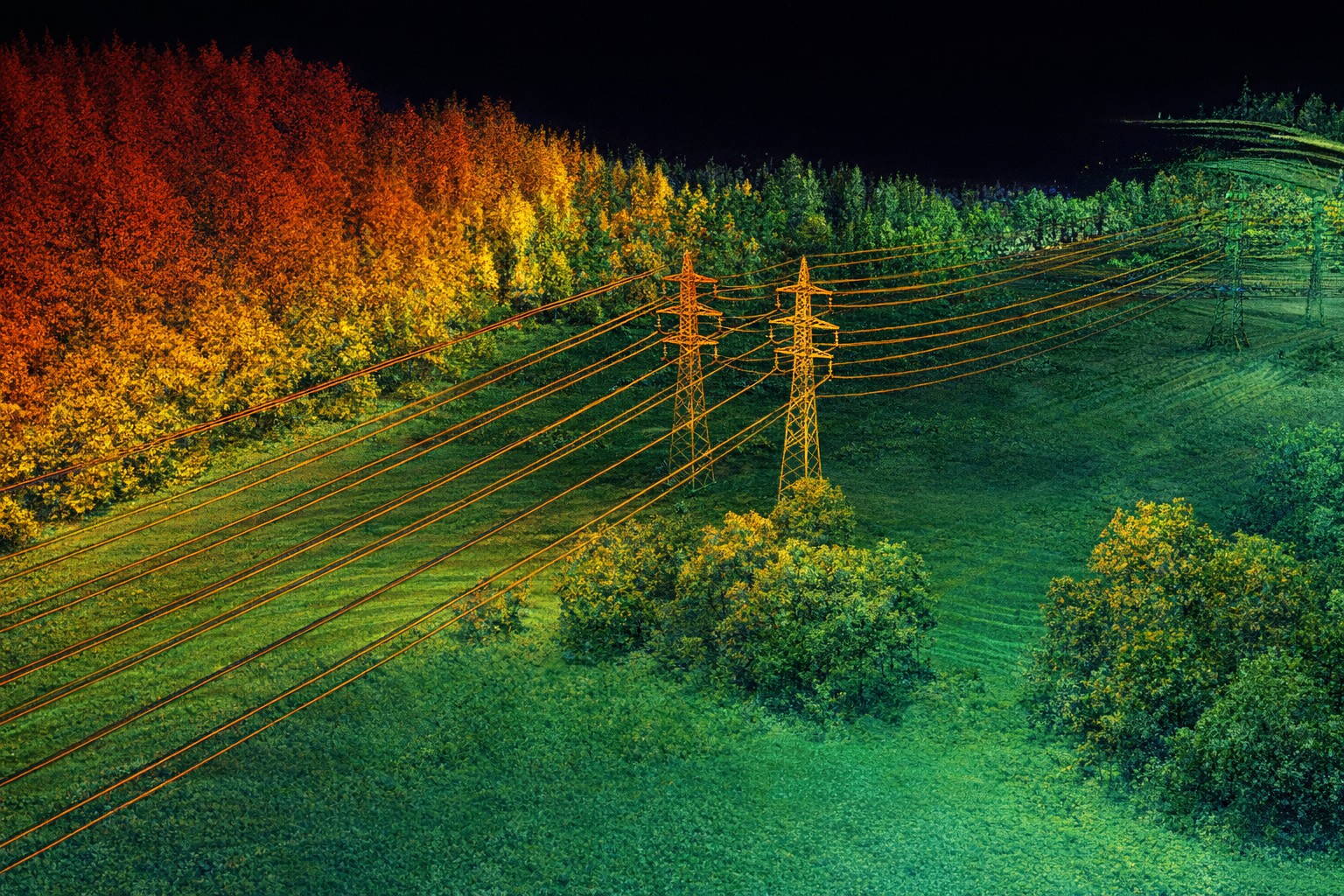

Yes! We work with custom sensors, unique vehicle configurations, and proprietary data formats. Our experience spans automotive, robotics, surveying, and GIS applications. We'll work with your technical team to develop annotation specifications tailored to your sensor data characteristics.

Do you offer revisions and iterations?

Absolutely. We provide revision rounds for quality improvement, feedback incorporation, and specification refinement. Our end-to-end partnership approach means we work closely with you until the annotated dataset meets your exact requirements for training your AI models.

What's your pricing model?

Pricing is project-specific and depends on dataset size, annotation complexity (simple vs. complex labeling tasks), turnaround requirements, and your specific annotation needs. We offer flexible pricing, including per-frame rates, project-based packages, and ongoing support agreements. Request a custom quote.

Do you annotate datasets beyond LiDAR?

Yes! While LiDAR annotation is our core expertise, we deliver comprehensive annotation services for vision datasets (object detection, segmentation, tracking), medical imaging, NLP text labeling, time-series data, and more. Our annotation specialists and QA teams can handle diverse data modalities. Contact us to discuss your specific dataset type.

What vision annotation services do you offer?

We provide 2D image annotation including bounding boxes, polygon segmentation, keypoint detection, instance segmentation, and panoptic segmentation. We handle diverse vision tasks for autonomous driving, robotics, drone footage, surveillance, and general computer vision projects. Our work meets automotive and industrial quality standards.

Can you handle multi-modal datasets combining LiDAR, camera, and other sensors?

Absolutely. We specialize in multi-modal annotation integrating LiDAR, camera, radar, and other sensor data. We ensure cross-modal consistency, time-alignment, and synchronized labeling across all data streams for comprehensive perception system training.
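
To illustrate what time-alignment involves, the following is a minimal Python sketch that pairs each LiDAR sweep with the nearest camera frame by timestamp and rejects pairs that are too far apart. It assumes timestamps in seconds on a shared clock and a sorted camera list; the function name, the `max_gap` parameter, and the sample timestamps are hypothetical.

```python
from bisect import bisect_left


def match_nearest(lidar_ts, camera_ts, max_gap):
    """Return, for each LiDAR timestamp, the index of the nearest camera
    timestamp, or None if the nearest one is more than max_gap away.

    camera_ts must be sorted in ascending order.
    """
    matches = []
    for t in lidar_ts:
        i = bisect_left(camera_ts, t)
        # The nearest neighbor is either just before or just after t.
        candidates = [j for j in (i - 1, i) if 0 <= j < len(camera_ts)]
        if not candidates:
            matches.append(None)
            continue
        best = min(candidates, key=lambda j: abs(camera_ts[j] - t))
        matches.append(best if abs(camera_ts[best] - t) <= max_gap else None)
    return matches


# Hypothetical timestamps: the last LiDAR sweep has no camera frame nearby.
lidar = [0.00, 0.10, 0.20, 0.50]
camera = [0.01, 0.09, 0.21, 0.30]
print(match_nearest(lidar, camera, max_gap=0.05))
```

Frames left unmatched are usually excluded from cross-modal labeling or flagged for review, since labels projected between poorly synchronized sensors will not line up.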

What industries do you serve?

We serve automotive (autonomous vehicles), robotics, autonomous systems, medical imaging, geospatial/GIS, drone applications, smart city infrastructure, and general computer vision/ML projects. Our annotation expertise spans industries requiring precise, production-ready labeled datasets.